
One of the major abstractions in AWS Glue is the DynamicFrame, which is similar to the DataFrame construct found in Spark SQL and pandas.
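To give a feel for how a DynamicFrame differs from a DataFrame, here is a minimal sketch in plain Python. It does not use the AWS Glue library. It only illustrates the idea that records describe themselves and that a schema is computed when needed, with conflicting types kept as a "choice". The sample records and the `infer_schema` helper are made up for illustration.

```python
from collections import defaultdict

# Hypothetical sample records: "id" is an int in one record and a string
# in the other, and "email" is missing from the second record.
records = [
    {"id": 1, "name": "Ada", "email": "ada@example.com"},
    {"id": "2", "name": "Grace"},
]


def infer_schema(rows):
    """Collect the Python type names seen for each field across all rows."""
    seen = defaultdict(set)
    for row in rows:
        for field, value in row.items():
            seen[field].add(type(value).__name__)
    # A field with a single type keeps it; several types become a "choice".
    return {
        field: next(iter(types)) if len(types) == 1 else {"choice": sorted(types)}
        for field, types in seen.items()
    }


schema = infer_schema(records)
print(schema)
```

A plain DataFrame would normally force you to settle the type of `id` before loading. Here the inconsistency stays visible, so you can decide later how to resolve it.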

You probably remember being (or still are) in school, having to prepare for an exam with a big load of study material spread out over textbooks, presentations and exercises. You partied the whole year and didn’t take notes during classes, and now you have to study all this material, much of it unnecessary, in just one day.

I see the ETL process as the smart, orderly kid in your class, who went to every lesson and made notes and summaries of the material. They take the different textbooks, presentations and exercises as input and extract all the necessary parts of the course (ignoring what is not needed for the exam). They add the extra comments from the professor (that you don’t have) and they structure the material into lists, bullet points and tables. With a bit of luck, you can get a copy of that kid’s summary. That is exactly what your ETL process should do.

Since this is such a key process in any data platform, Amazon Web Services has introduced its own fully managed service that does just this.

What is AWS Glue?

As mentioned above, AWS Glue is a fully managed, serverless environment where you can extract, transform, and load (ETL) your data. AWS Glue makes it cost-effective to categorize your data, clean it, enrich it, and move it reliably between various data stores and data streams. It works well with structured and semi-structured data and has an intuitive console to discover and transform the data, using Spark, Python or Scala.

AWS Glue calls API operations to transform your data, create runtime logs, store your job logic, and create notifications to help you monitor your job runs. The AWS Glue console connects these services into a managed application, so you can focus on creating and monitoring your ETL work.

AWS Glue runs your ETL jobs in an Apache Spark serverless environment. When resources are required, AWS Glue uses an instance from its warm pool of instances to run your workload, which reduces startup time. AWS Glue runs these jobs on virtual resources that it provisions and manages in its own service account. For each Glue ETL job, a new Spark environment is created, which is protected by an IAM role, a VPC, a subnet and a security group.

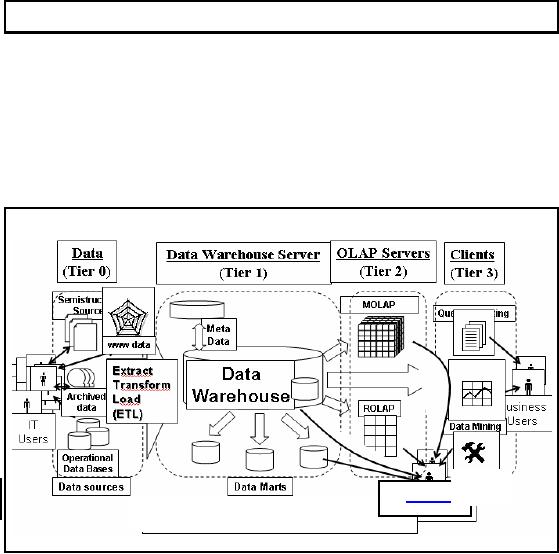

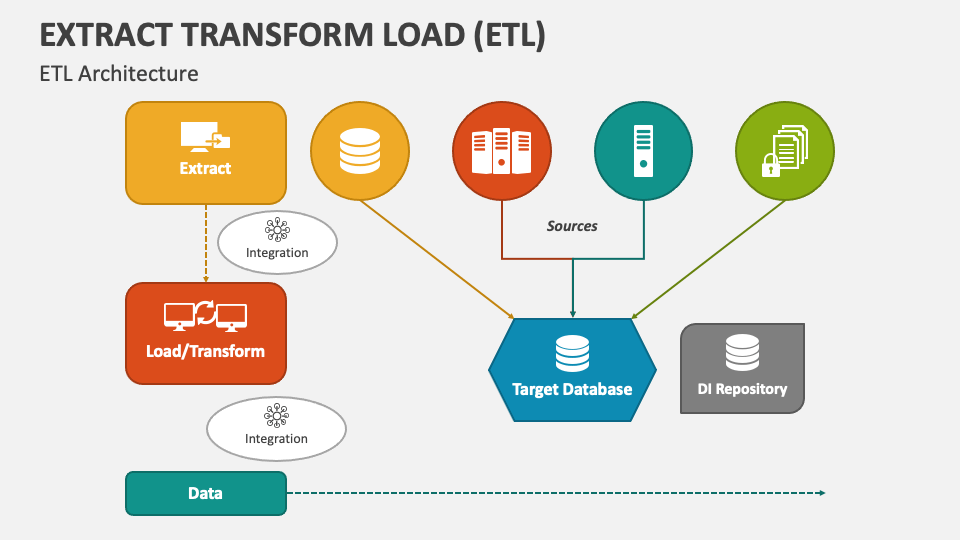

As you can derive from its name, ETL is a process that consists of three steps: Extract the data from the source, Transform the data in order to structure and clean it, and Load the data into the right place to make it easily accessible for analytics and reporting. As you can see above, the ETL process serves as a bridge between the multiple raw data inputs and the clean, structured and interpretable output.
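The three steps are easier to picture with a tiny example. Below is a minimal sketch using only the Python standard library, not AWS Glue. The raw CSV content, the `sales` table and the function names are all assumptions made up for illustration.

```python
import csv
import io
import sqlite3

# Hypothetical raw input: inconsistent casing, stray whitespace,
# and one row without an amount.
RAW_CSV = """name,amount
 alice ,10.5
BOB,
carol,7
"""


def extract(text):
    """Extract: read the raw rows from the source (here an in-memory CSV)."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: drop incomplete rows, tidy the names, parse the amounts."""
    cleaned = []
    for row in rows:
        amount = (row["amount"] or "").strip()
        if not amount:
            continue  # skip rows that have no amount
        cleaned.append((row["name"].strip().title(), float(amount)))
    return cleaned


def load(rows, conn):
    """Load: write the cleaned rows into a table that is easy to query."""
    conn.execute("CREATE TABLE IF NOT EXISTS sales (name TEXT, amount REAL)")
    conn.executemany("INSERT INTO sales VALUES (?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
result = conn.execute("SELECT name, amount FROM sales ORDER BY name").fetchall()
print(result)
```

Each function maps to one letter of ETL, so you could swap the source or the destination without touching the cleaning logic in the middle.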
